India AI Impact Summit 2026 offered something rare in the global AI conversation: a blueprint that begins not with the algorithm, but with the human.

The word manav means ‘human’ or ‘mankind’ in Sanskrit. It is a deliberately chosen ancient word that carries the weight of a civilisation that has long grappled with questions of dignity, duty, and collective welfare. When India’s AI Impact Summit 2026 unveiled the MANAV Vision as its central framework for AI governance, it was making a philosophical statement as much as a policy one: that the future of artificial intelligence must be measured not in benchmark scores or market capitalisation, but in the quality of life it delivers to those who have, until now, been left at the periphery of the digital age.

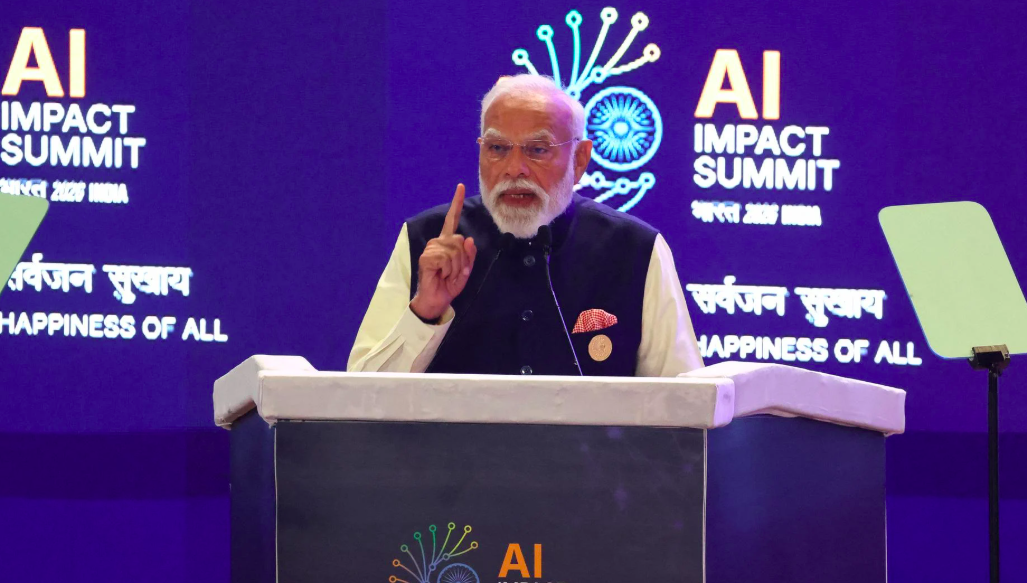

The summit, held in New Delhi, positioned itself as a global stage, and in many ways, it succeeded. Against a backdrop of ongoing debates in Geneva, Brussels, and Washington about regulation, liability, and existential risk, the MANAV framework offered something different: a vision of AI as a tool that amplifies what ordinary people can do, rather than one that concentrates wealth and capability in the hands of a few.

Five pillars anchor the vision:

- M – Moral and Ethical Systems: Guiding AI through established cultural and ethical norms.

- A – Accountable Governance: Moving from ‘black box’ decisions to transparent rules and audits.

- N – National Sovereignty: Protecting the principle of “Whose data, their right,” ensuring that digital assets remain under the control of those who generate them.

- A – Accessible and Inclusive: Breaking the ‘AI monopoly’ by designing for users who don’t fit the ‘ideal template’ of high literacy and stable connectivity.

- V – Valid and Legitimate: Ensuring systems are verifiable and lawful before they are deployed at scale.

Together, these form a governance architecture built not for the ‘ideal user’, but for the billions of people who don’t fit the template that most AI systems were designed around.

From data points to ‘energy agents’

Perhaps no single story from the summit captured the spirit of the MANAV vision more vividly than the rollout of a Peer-to-Peer (P2P) energy trading platform. A farmer in Meerut, equipped with rooftop solar panels and a smartphone, sold surplus electricity directly to a shopkeeper in Delhi through a blockchain-enabled AI platform. No utility company intermediary. No bureaucratic bottleneck. Just two citizens, a smart contract, and a transaction that made economic sense for both of them.

This is what the summit’s architects called ‘humanism in practice’. The AI system in this case did not merely automate a task or optimise a supply chain in the background. It transformed the farmer from a passive recipient of agricultural subsidies into an active economic agent: a producer, a trader, a participant in the energy market. The language used at the summit was precise: the farmer became an energy agent. That framing matters. It signals a shift in how we think about the relationship between citizens and technology, away from the paternalistic model in which systems are deployed at people, and toward one in which systems are designed with and for them.

This distinction lies at the heart of MANAV Vision’s pillar on National Sovereignty, which holds that digital assets, including data generated by rural farmers, small traders, and daily wage workers, belong to those who create them. ‘Whose data, their right’ is not only a slogan. It is a structural claim about who should benefit from the value that AI systems extract from human activity.

The ‘inclusivity stack’ vs. the ideal user

There is a quiet assumption embedded in most AI systems developed in high-income contexts: that the user is literate, screen-comfortable, stably connected, and operating in a major world language. Call this the ‘ideal user’ template. The problem, of course, is that the majority of the world’s population does not fit it.

The MANAV summit confronted this head-on with the concept of an Inclusivity Stack, a technical framework explicitly designed for the ‘non-ideal user’. In practice, this means prioritising voice-to-action interfaces that allow a person to navigate complex public services (accessing government benefits, filing a complaint, checking land records) through their local language, without ever needing to read a form or tap through a menu. It means designing for intermittent connectivity, low-end devices, and the kind of fragmented digital literacy that characterises millions of first-generation smartphone users.

This is not a niche technical problem. It is the central design challenge of AI governance in the majority of the world. As anyone who has spent time observing technology deployments in schools or rural health clinics can attest, the gap between the solution as designed and the reality on the ground is almost always wider than anticipated. The classroom lacks reliable electricity. The government portal times out on a 2G connection. The chatbot cannot parse a regional dialect. These are not edge cases. They are the norm for a substantial portion of humanity. The Inclusivity Stack is an acknowledgement that building for these users is not charity; it is a prerequisite for any AI system that claims to serve the public good.

The ‘authenticity label’ and the digital social contract

One of the more striking proposals to emerge from the summit was a call for ‘nutrition labels’ for digital content, as speakers described them. The analogy is intuitive: just as consumers in a supermarket can read a label to understand what is in their food, citizens navigating the digital information environment should be able to verify whether the content they are encountering is real, synthetic, or somewhere in between.

The MANAV framework advocates for mandatory authenticity labels and watermarking for AI-generated content, a technical and regulatory layer designed to help open societies maintain what the summit called the ‘Digital Social Contract’. In countries where institutional trust is fragile, where misinformation spreads rapidly through WhatsApp chains and local-language social media, and where the line between satire and incitement can have real-world consequences, the stakes of synthetic content are not abstract. They are electoral, communal, and sometimes violent.

This proposal also connects to the framework’s emphasis on valid and legitimate AI systems, the principle that a system must be verifiable and lawful before it is deployed at scale. Too often in the history of technology, governance has chased innovation rather than shaped it. The authenticity label approach represents an attempt to flip that dynamic: to build trust mechanisms into AI infrastructure from the beginning, rather than retrofitting them after the damage has been done.

The messy reality

Any honest account of the summit must also reckon with its contradictions, and there were several worth noting.

On the day of the event, registration systems crashed under load, creating long queues and frayed tempers at the entrance of a conference dedicated to seamless digital futures. In a detail that felt almost too symbolic to be true, some transactions at the venue were cash-only. These are small logistical failures, but they are instructive. They are a reminder that the distance between a compelling vision and a functioning system is measured not in policy documents, but in implementation capacity, institutional coordination, and the unglamorous work of making things actually work.

More pointed was the ‘fake robot dog’ controversy that surfaced in the margins of the summit. A university had apparently showcased a Chinese-manufactured robot as its own domestic innovation, a rebranding exercise that was quickly exposed. The incident highlighted a deeper tension in any sovereignty-focused AI agenda: the gap between nominal innovation and genuine sovereign capability. Declaring data sovereignty is straightforward. Building the technical stack, the talent pipeline, and the institutional infrastructure to put it to meaningful use is another matter entirely. The MANAV vision is admirably ambitious. But ambition without honest reckoning with this gap risks becoming performance rather than progress.

The right to be human

And yet, the core argument of the MANAV Vision remains powerful and necessary.

At a moment when the global AI conversation is dominated by a handful of large technology companies and a small number of wealthy states, the New Delhi summit made the case for a different kind of AI future. It is a future grounded in the Sanskrit concept of Sarvajana Hitaya, welfare for all: one that treats voice, local language, and low-bandwidth access as the starting point for design, and that measures an intelligent system not by how efficiently it processes data, but by how meaningfully it expands human dignity.

There is a phrase that captures this orientation well: the ‘right to be imperfect’. It is the idea that AI systems must make space for the full spectrum of human users, not just those who are highly literate, highly connected, and operating in the languages and contexts that dominate the training data. The billions of voices left at the periphery of the digital age are not outliers to be accommodated. They are, in fact, the majority.

The MANAV Vision, for all its aspirational language and summit-day stumbles, is pointing toward something that matters: a world in which the question we ask of any AI system is not ‘how smart is it?’ but ‘whose welfare does it serve?’ In New Delhi in 2026, that question was placed on the table. The harder work of turning a five-pillar vision into functioning infrastructure, genuine accountability, and measurable improvement in the lives of those least served by the current order is just beginning.

The India AI Impact Summit 2026 took place in New Delhi. The MANAV Vision framework was presented as a multi-stakeholder governance blueprint for AI development in less-represented economies.